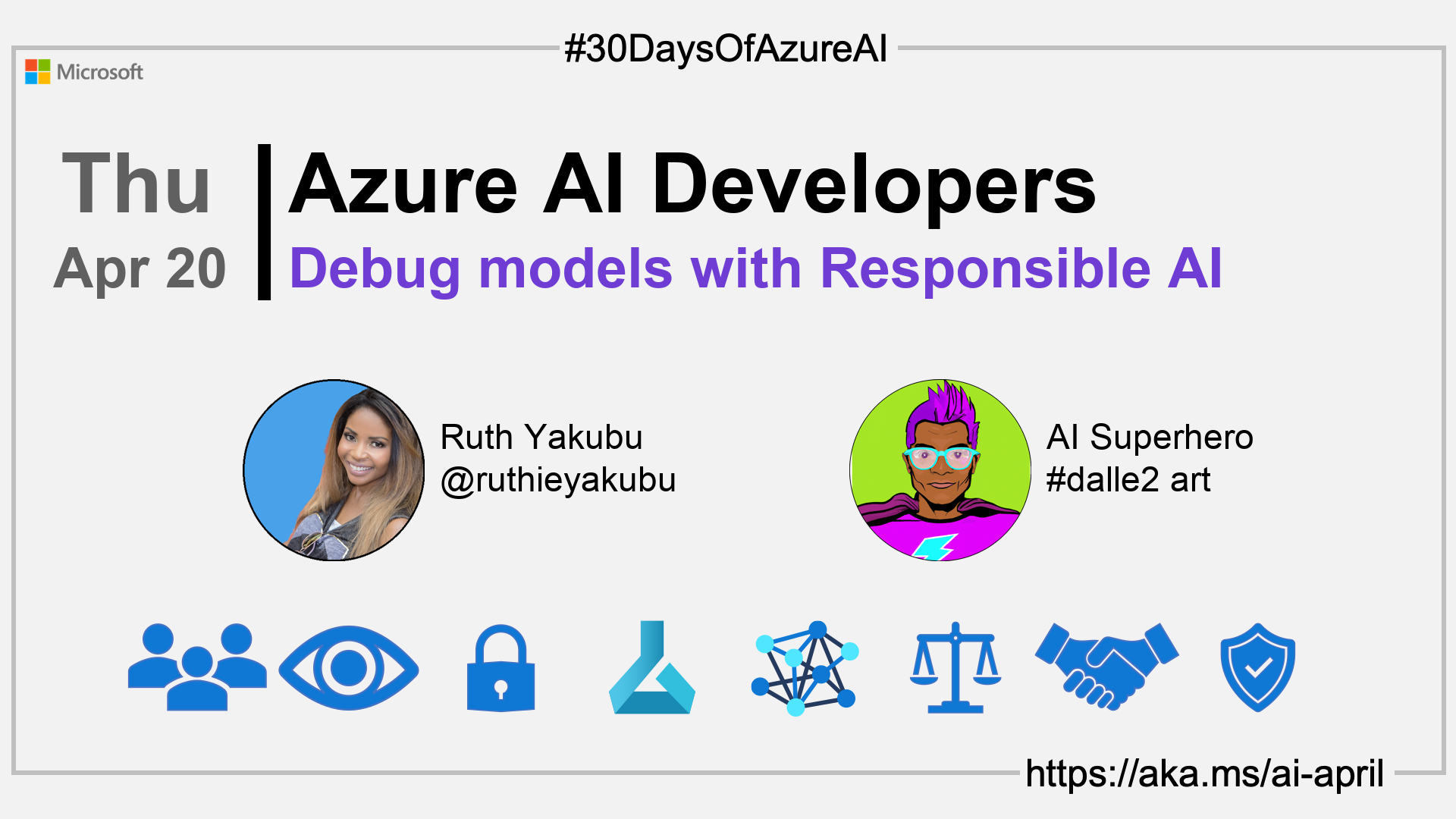

🗓️ Day 19 of #30DaysOfAzureAI

Guide to analyzing ML models for Responsible AI issues (Part 1)

Yesterday we learned how to deploy ML models using Azure ML managed online endpoints, and in the "Fundamentals" week we learned about the importance of Responsible AI. Today we get practical: you'll learn about the Azure ML Responsible AI Dashboard and how it can help you build fairer ML models.

🎯 What we'll cover

- The Azure ML RAI Dashboard.

- Building fairer, more responsible AI models.

- Tools for responsible AI development, including model interpretability, error analysis, and counterfactual analysis.

📚 References

- Microsoft's approach to using AI responsibly

- Meeting the AI moment: advancing the future through responsible AI

🚌 What is the Responsible AI Dashboard?

Today's article is about the Responsible AI (RAI) Dashboard, a suite of open-source tools that helps developers create responsible AI models. Its features include model statistics, a data explorer, error analysis, model interpretability, counterfactual analysis, and causal inference. The dashboard is built on leading open-source tools such as ErrorAnalysis, InterpretML, Fairlearn, DiCE, and EconML, and it can be accessed through the Azure Machine Learning platform. The RAI components let developers troubleshoot and analyze models, so they can make better-informed decisions and produce more responsible AI systems.
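
To make the error analysis idea concrete, here is a minimal sketch in plain Python of the kind of question the dashboard helps answer: does the model make more mistakes for one cohort than for another? This is not the dashboard's implementation or API. The function name `error_rate_by_cohort`, the `age_group` feature, and the hand-made records are all hypothetical and exist only for illustration.

```python
from collections import defaultdict


def error_rate_by_cohort(records, cohort_key):
    """Return {cohort value: fraction of misclassified records in that cohort}."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for row in records:
        cohort = row[cohort_key]
        totals[cohort] += 1
        if row["label"] != row["prediction"]:
            errors[cohort] += 1
    return {cohort: errors[cohort] / totals[cohort] for cohort in totals}


# Hypothetical, hand-made model outputs (assumption: binary classification).
records = [
    {"age_group": "under_30", "label": 1, "prediction": 1},
    {"age_group": "under_30", "label": 0, "prediction": 0},
    {"age_group": "under_30", "label": 1, "prediction": 1},
    {"age_group": "under_30", "label": 0, "prediction": 1},
    {"age_group": "over_60", "label": 1, "prediction": 0},
    {"age_group": "over_60", "label": 0, "prediction": 1},
    {"age_group": "over_60", "label": 1, "prediction": 1},
    {"age_group": "over_60", "label": 0, "prediction": 1},
]

# Overall error rate hides the gap between cohorts.
overall = sum(r["label"] != r["prediction"] for r in records) / len(records)
rates = error_rate_by_cohort(records, "age_group")

print(f"overall error rate: {overall:.2f}")
for cohort, rate in rates.items():
    print(f"{cohort}: {rate:.2f}")
```

In this toy data the overall error rate looks moderate, while the per-cohort breakdown shows the errors are concentrated in one group. The dashboard's error analysis and fairness views surface this kind of disparity interactively, across many features at once.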

👓 View today's article

Today's article.

🙋🏾‍♂️ Questions?

You can ask questions about this post on GitHub Discussions.

📍 30 days roadmap

What's next? View the #30DaysOfAzureAI Roadmap